TL;DR: The EU AI Act, most of whose obligations apply from August 2026, is the world’s first comprehensive AI regulation. AI tool providers must meet strict safety, transparency, and accountability standards or face fines of up to €35 million or 7% of global annual revenue, whichever is higher.

What Is the EU AI Act 2026?

The EU AI Act is Europe’s groundbreaking legislation establishing the world’s first comprehensive legal framework for artificial intelligence. It entered into force in August 2024 and applies in stages, with most obligations taking effect in August 2026. It categorizes AI systems by risk level and imposes specific obligations on providers, from basic transparency requirements to outright bans on certain applications.

Think of it as Europe’s answer to GDPR, but for AI. Just as GDPR transformed how companies handle personal data globally, the AI Act is reshaping how AI tools operate, not just in Europe but worldwide. Any AI company serving European users must comply, making this regulation effectively global in scope.

How the EU AI Act Works in Practice

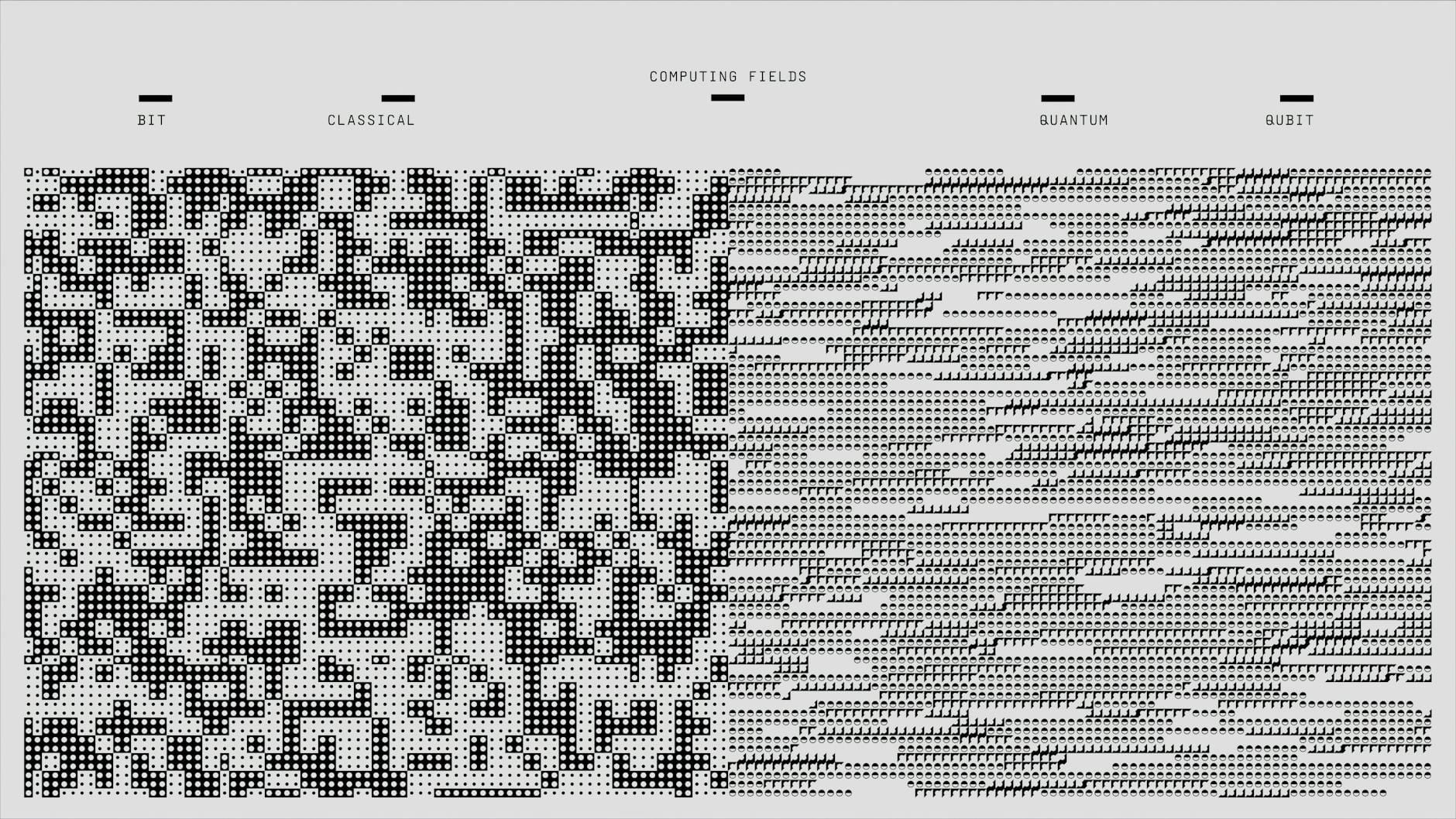

The Act takes a risk-based pyramid approach with four categories. At the bottom, “minimal risk” AI systems like spam filters face no new mandatory obligations, only voluntary codes of conduct. “Limited risk” systems like chatbots must clearly disclose that they’re AI-powered. “High-risk” AI tools used in critical areas like hiring, healthcare, or education face extensive testing, documentation, and monitoring requirements.

At the top, “unacceptable risk” systems are banned entirely. This includes AI for social scoring, emotion recognition in workplaces, and predictive policing based on profiling.
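The four tiers can be pictured as a simple lookup. The sketch below is illustrative only: the use-case map contains just the examples named in this article, the obligation summaries are paraphrases, and names such as `RiskTier`, `EXAMPLE_USE_CASES`, and `describe` are hypothetical. Classifying a real system requires a legal assessment, not a dictionary.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical lookup built only from the examples named in this article.
EXAMPLE_USE_CASES = {
    "spam filter": RiskTier.MINIMAL,
    "chatbot": RiskTier.LIMITED,
    "hiring": RiskTier.HIGH,
    "healthcare": RiskTier.HIGH,
    "education": RiskTier.HIGH,
    "social scoring": RiskTier.UNACCEPTABLE,
    "workplace emotion recognition": RiskTier.UNACCEPTABLE,
    "predictive policing based on profiling": RiskTier.UNACCEPTABLE,
}

# Paraphrased one-line summaries of what each tier implies.
OBLIGATIONS = {
    RiskTier.MINIMAL: "no new mandatory obligations",
    RiskTier.LIMITED: "disclose that users are interacting with AI",
    RiskTier.HIGH: "testing, documentation, and monitoring",
    RiskTier.UNACCEPTABLE: "banned",
}


def describe(use_case: str) -> str:
    """Return a one-line summary for a use case from the example map."""
    tier = EXAMPLE_USE_CASES.get(use_case.strip().lower())
    if tier is None:
        return f"{use_case}: not in this example map, needs a proper legal assessment"
    return f"{use_case}: {tier.value} risk ({OBLIGATIONS[tier]})"


if __name__ == "__main__":
    for case in ("chatbot", "hiring", "social scoring", "weather forecast"):
        print(describe(case))
```

Anything missing from the map falls through to the "needs a proper legal assessment" branch, which is the safe default for a tool you have not classified yet.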

Here’s a real example: Frase, an AI-powered content optimization tool, now displays clear AI disclosure notices and maintains detailed logs of its content generation processes to comply with EU transparency requirements. The company invested over $2 million in compliance infrastructure during 2025-2026.

Why the EU AI Act Matters Right Now

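Most of the Act’s obligations apply from August 2026, but its impact started much earlier: the bans on unacceptable-risk practices took effect in February 2025, and the rules for general-purpose AI models followed in August 2025. Major AI providers spent 2025 restructuring their products, and many smaller tools either withdrew from European markets or underwent expensive compliance overhauls.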

The regulation matters because Europe represents 27% of the global AI market. When the EU sets standards, other markets tend to follow, as happened with GDPR’s global privacy impact. Companies like OpenAI, Google, and Anthropic have already modified their AI models globally to meet EU requirements rather than maintaining separate versions.

For AI tool users, this means more transparency but potentially higher costs. Compliance expenses are being passed to consumers, with many AI tools increasing European pricing by 15-25% in 2026. However, users also get stronger protections against biased algorithms and clearer information about how AI systems make decisions.

EU AI Act vs. Other AI Regulations

The EU took the most comprehensive approach compared to other regions:

| | EU AI Act | US Executive Order | UK AI Principles | China AI Regulations |
|---|---|---|---|---|
| Scope | Comprehensive, legally binding | Federal agencies only | Guidelines, voluntary | Specific to algorithms |
| Penalties | Up to €35M or 7% of revenue | Funding restrictions | Regulatory guidance | Service suspension |
| Risk Classification | 4-tier pyramid system | Sector-specific | Principles-based | Content-focused |
| Global Impact | High (Brussels Effect) | Medium | Low | Regional |

The EU’s approach is the most aggressive, creating binding legal obligations with severe financial penalties. This contrasts sharply with the US’s more hands-off approach and the UK’s principles-based framework.

What This Means for You

If you’re a business using AI tools: Audit your current AI stack immediately. Document which tools are used in the EU or process data about people in the EU, and verify their compliance status. Many popular tools, including the AI SEO tools we have reviewed, have updated their terms and pricing to reflect EU compliance costs.
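An audit like this can start as a small inventory that flags the tools needing follow-up. The sketch below is a minimal illustration: the `AITool` fields, the `needs_follow_up` helper, and the tool names are all hypothetical placeholders, and "verified" simply means you have confirmed the vendor's compliance status yourself.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    name: str
    touches_eu: bool           # used in the EU or handles data about people in the EU
    compliance_verified: bool  # vendor's compliance status has been checked


def needs_follow_up(tools: list[AITool]) -> list[str]:
    """Return names of tools with EU exposure whose compliance is unverified."""
    return [t.name for t in tools if t.touches_eu and not t.compliance_verified]


# Hypothetical inventory; replace with your own AI stack.
inventory = [
    AITool("content-optimizer", touches_eu=True, compliance_verified=False),
    AITool("video-generator", touches_eu=True, compliance_verified=True),
    AITool("internal-spam-filter", touches_eu=False, compliance_verified=False),
]

if __name__ == "__main__":
    print(needs_follow_up(inventory))  # ['content-optimizer']
```

A spreadsheet does the same job; what matters is recording EU exposure and verification status per tool so that gaps are visible.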

If you’re an AI tool provider: The compliance burden is substantial. You’ll need legal review, technical documentation, risk assessments, and ongoing monitoring systems. Budget 20-30% of development costs for compliance if serving EU markets.

If you’re creating AI-generated content: Tools like Pictory now require clear disclosure when content is AI-generated for EU audiences. This affects marketing materials, social media posts, and any content that could influence European consumers.

The bottom line: factor compliance costs into your AI tool budget and expect more transparency but higher prices for AI services in 2026.

FAQ

What is the EU AI Act in simple terms?

It’s Europe’s law that regulates AI systems based on their risk level, requiring everything from basic transparency to extensive testing and documentation.

How is the EU AI Act different from GDPR?

GDPR focuses on personal data protection, while the AI Act regulates AI system behavior, safety, and transparency regardless of whether personal data is involved.

Do US companies need to comply with the EU AI Act?

Yes, if they offer AI services to EU residents or their AI systems are used in the EU market.

What are the penalties for non-compliance?

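Fines of up to €35 million or 7% of global annual revenue, whichever is higher, for the most serious violations such as using banned AI practices, plus potential service bans in EU markets. Lower ceilings apply to other breaches, for example up to €15 million or 3% for most other obligations.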
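The "whichever is higher" rule is easy to misread, so here is the arithmetic as a short sketch. It covers only the top ceiling quoted above (€35 million or 7%), uses whole euros to avoid rounding surprises, and the function name `max_fine_eur` is made up for illustration. Actual fines are set case by case and can be far below the ceiling.

```python
FIXED_CAP_EUR = 35_000_000  # fixed ceiling quoted in the article
REVENUE_PERCENT = 7         # percentage of global annual revenue


def max_fine_eur(global_annual_revenue_eur: int) -> int:
    """Upper bound on the fine: the higher of the fixed cap and 7% of revenue."""
    if global_annual_revenue_eur < 0:
        raise ValueError("revenue must be non-negative")
    revenue_based = global_annual_revenue_eur * REVENUE_PERCENT // 100
    return max(FIXED_CAP_EUR, revenue_based)


if __name__ == "__main__":
    print(max_fine_eur(100_000_000))    # 35000000 (7% would be only 7 million)
    print(max_fine_eur(2_000_000_000))  # 140000000 (7% exceeds the fixed cap)
```

The two figures meet at €500 million in revenue: below that the €35 million figure is the ceiling, above it the 7% figure takes over.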

Which AI tools are banned under the Act?

Social scoring systems, workplace emotion recognition, predictive policing based on profiling, and AI systems that manipulate human behavior through subliminal techniques.

Bottom Line

The EU AI Act 2026 represents the most significant AI regulation in history, fundamentally changing how AI tools operate globally. While compliance costs are driving up prices, users benefit from greater transparency and protection against harmful AI applications.

For businesses, the message is clear: audit your AI tools now and budget for compliance costs. The “wild west” era of AI development is over, and regulated, accountable AI is the new standard. Companies that adapt quickly will maintain a competitive advantage, while those that ignore compliance risk expensive penalties and market exclusion.